Random Dot Product Graphs as Dynamical Systems: Limitations and Opportunities

Notes

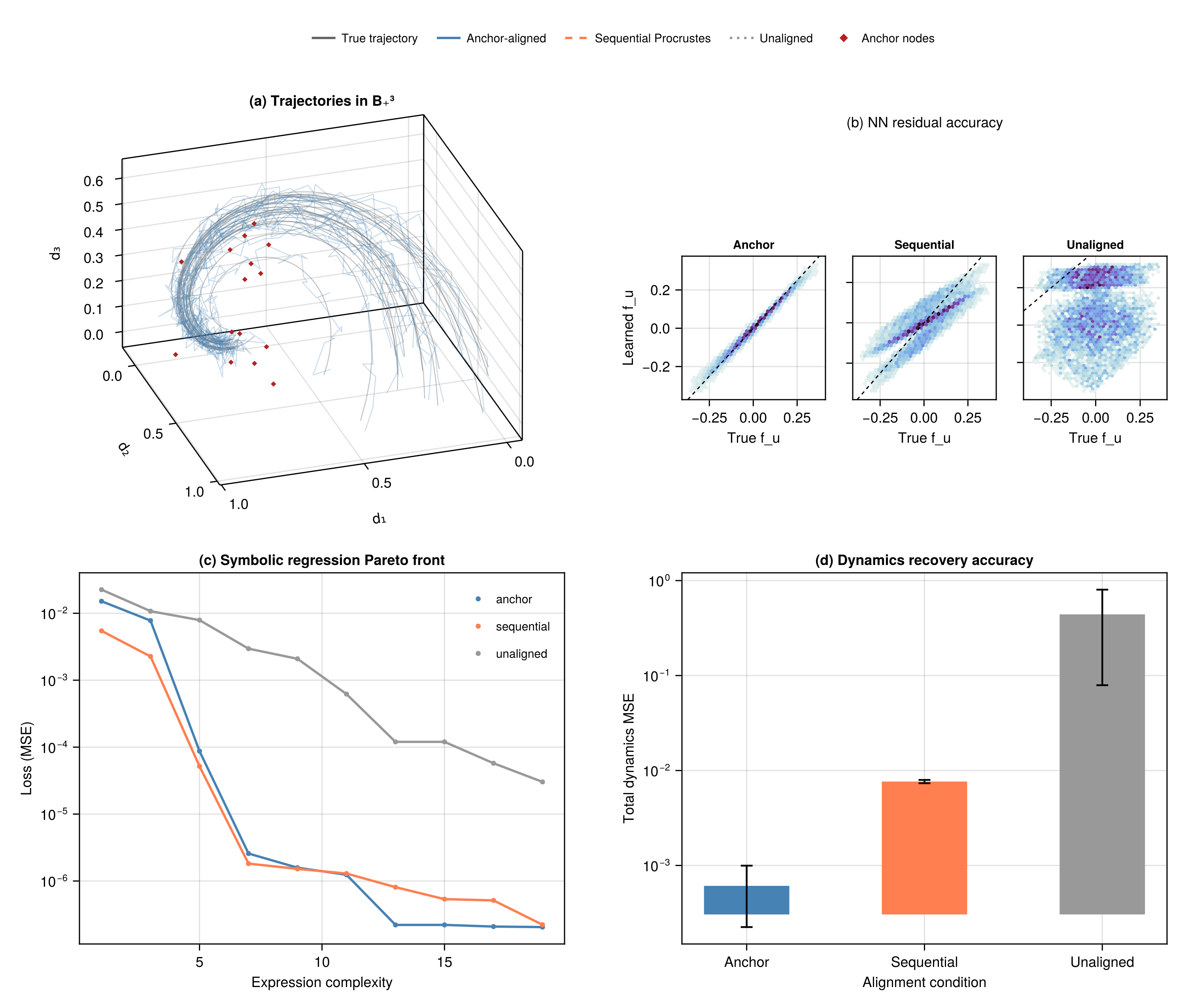

Learning dynamics from time-varying latent representations is a goal shared across many domains, from neural connectomics to social network analysis, yet it is fundamentally complicated by the non-identifiability inherent in latent variable models. When the likelihood is invariant under a symmetry group, some latent motions are invisible, and local estimates may not stitch into globally consistent trajectories. How should one formalize these obstructions, and what tools can quantify them? We address these questions concretely within the framework of Random Dot Product Graphs (RDPGs), where network snapshots are generated from latent positions evolving under unknown dynamics and the O(d) rotational symmetry is explicit. We identify three fundamental obstructions to recovering the governing differential equations: gauge freedom from rotational non-identifiability, realizability constraints from the manifold structure of the probability matrix, and trajectory recovery artifacts introduced by spectral embedding. We develop a geometric framework based on principal fiber bundles that formalizes these obstructions and reveals their interplay. Connections and curvature on the bundle quantify gauge velocity and explain when local alignment fails to globalize: polynomial dynamics have trivial holonomy, while Laplacian dynamics satisfy a proved non-commutativity criterion for nontrivial holonomy (full restricted SO(2) in dimension two; conditional in higher dimensions). Cramér-Rao bounds reveal that the spectral gap controlling curvature simultaneously controls Fisher information, so geometric and statistical difficulty are inextricable. An identifiability principle shows that symmetric dynamics cannot absorb skew-symmetric gauge contamination, yet significant practical obstacles remain in finite samples. We frame the gap between identifiability and constructive recovery as an open challenge.
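The gauge freedom described above can be made concrete with a small numerical sketch. The example below is illustrative and not taken from the paper: the function names (`sample_rdpg`, `ase`, `procrustes_align`), the latent position distribution, the rotation angle, and the sizes `n` and `d` are all assumptions chosen for the demonstration. It checks that the probability matrix P = X Xᵀ is unchanged when X is replaced by XQ for an orthogonal Q, and that a spectral embedding of a sampled snapshot matches the true positions only after an orthogonal Procrustes alignment.

```python
import numpy as np


def sample_rdpg(X, rng):
    """Sample a symmetric adjacency matrix without self-loops, with edge probabilities X X^T."""
    P = np.clip(X @ X.T, 0.0, 1.0)
    n = P.shape[0]
    # Draw the strict upper triangle, then mirror it.
    upper = np.triu(rng.random((n, n)) < P, k=1)
    return (upper | upper.T).astype(float)


def ase(A, d):
    """Adjacency spectral embedding: top-d eigenvectors scaled by sqrt(|eigenvalue|)."""
    vals, vecs = np.linalg.eigh(A)
    idx = np.argsort(vals)[::-1][:d]
    return vecs[:, idx] * np.sqrt(np.abs(vals[idx]))


def procrustes_align(X_hat, X_ref):
    """Orthogonal W minimizing the Frobenius norm of X_hat @ W - X_ref."""
    U, _, Vt = np.linalg.svd(X_hat.T @ X_ref)
    return U @ Vt


rng = np.random.default_rng(0)
n, d = 300, 2  # assumed sizes, for illustration only

# Assumed latent positions; entries in [0.2, 0.7] keep all inner products inside (0, 1).
X = rng.uniform(0.2, 0.7, size=(n, d))

# Gauge freedom: rotating every latent position by the same Q leaves P unchanged.
theta = 0.9
Q = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta), np.cos(theta)]])
gauge_invariant = np.allclose(X @ X.T, (X @ Q) @ (X @ Q).T)

# One network snapshot and its embedding, recovered only up to an orthogonal transform.
A = sample_rdpg(X, rng)
X_hat = ase(A, d)
W = procrustes_align(X_hat, X)

err_raw = np.linalg.norm(X_hat - X) / np.sqrt(n)
err_aligned = np.linalg.norm(X_hat @ W - X) / np.sqrt(n)

print("P invariant under rotation:", gauge_invariant)
print("error before alignment:", err_raw)
print("error after alignment: ", err_aligned)
```

Alignment here uses the true positions as the reference, which is unavailable in practice. With a time series of snapshots one would instead align each embedding to its neighbor, and that local alignment is the step the abstract warns may fail to globalize.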

